Blackboard Pattern

Over the last several months, I have worked with a distributed system built on the blackboard pattern, and came across quite a lot of bugs that were difficult to wrap my head around. I think these problems can all be traced back to the basic idea of this design pattern.

Originating in the early AI systems of the 1970s, the pattern is built on the idea of dividing a hard problem that has no deterministic solution into smaller, solvable subproblems.

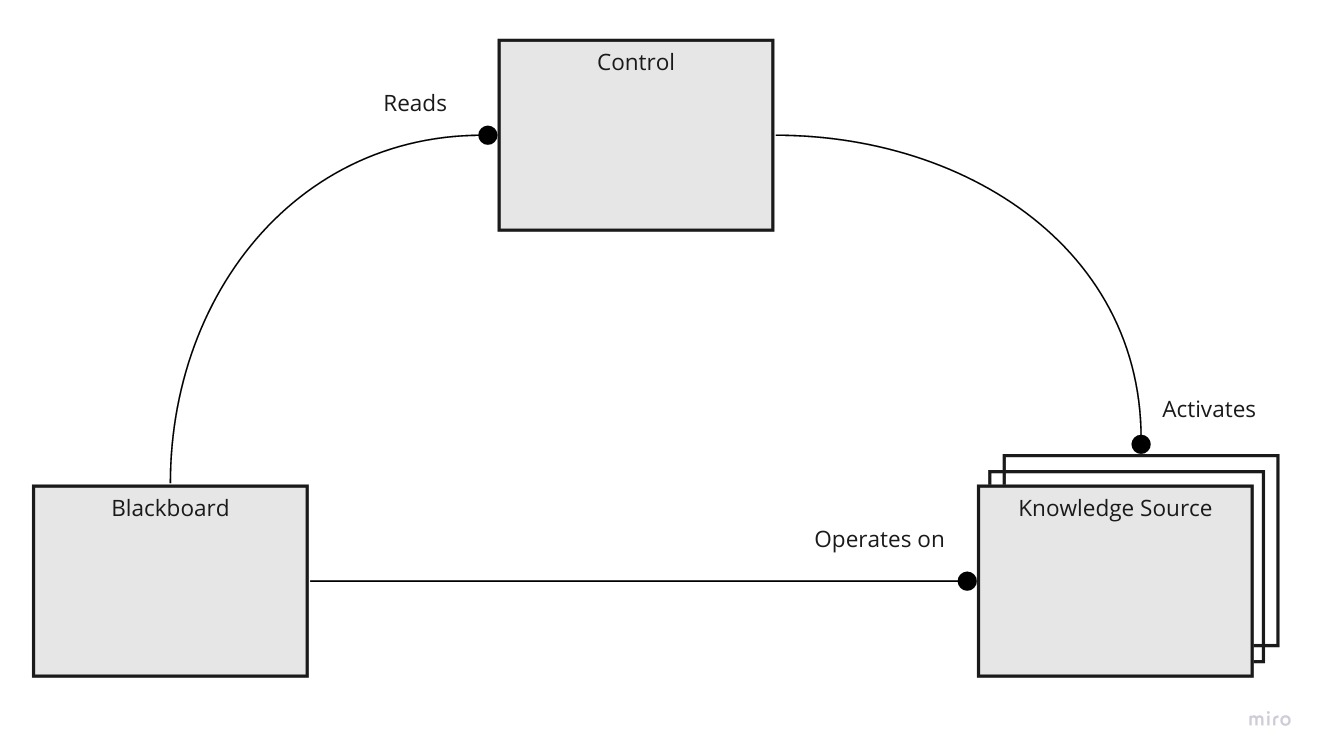

There are three main concepts in this pattern:

- blackboard: a datastore shared by the other components

- knowledge sources: a group of systems, each focusing on solving a small-scale problem independently

- control component: a central component that decides which knowledge source to execute at a given time, in order to solve the full problem

The structure can be visualized like this:

Each knowledge source focuses on a specific problem and normally uses only part of the information on the blackboard. Usually, each of these subproblems is solvable in a deterministic way. Each knowledge source also exposes a condition on the blackboard state under which it can be triggered.
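A minimal Python sketch of a single knowledge source, assuming the blackboard is a plain dictionary and that a knowledge source is just a name, a trigger condition, and an action. The `KnowledgeSource` class and the `tokenizer` example are hypothetical and not taken from any particular framework.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict

# Assumption: the blackboard is a plain shared dictionary.
Blackboard = Dict[str, Any]


@dataclass
class KnowledgeSource:
    """Hypothetical knowledge source: a trigger condition plus an action."""
    name: str
    # Condition on the blackboard state under which this source may run.
    condition: Callable[[Blackboard], bool]
    # Reads the part of the blackboard it needs and writes its result back.
    action: Callable[[Blackboard], None]


def tokenize(bb: Blackboard) -> None:
    # Uses only the "text" entry and contributes only the "tokens" entry.
    bb["tokens"] = bb["text"].split()


tokenizer = KnowledgeSource(
    name="tokenizer",
    # Triggerable once raw text exists and has not been tokenized yet.
    condition=lambda bb: "text" in bb and "tokens" not in bb,
    action=tokenize,
)

bb: Blackboard = {"text": "hello blackboard world"}
if tokenizer.condition(bb):
    tokenizer.action(bb)
print(bb["tokens"])  # ['hello', 'blackboard', 'world']
```

The knowledge source knows nothing about who put `text` on the blackboard or who will consume `tokens`; it only declares when it is ready to run.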

The control component repeatedly reads the state of the blackboard and checks it against each knowledge source’s condition to select the next knowledge source to execute.
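The control loop can be sketched in Python as follows. This is a minimal sketch under stated assumptions: the blackboard is a dictionary, `select_next` uses a hypothetical "first source whose condition holds" policy, and `run_controller`, `tokenize`, and `count` are illustrative names. A real control component may rank candidates with priorities or heuristics instead.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Optional

Blackboard = Dict[str, Any]


@dataclass
class KnowledgeSource:
    name: str
    condition: Callable[[Blackboard], bool]
    action: Callable[[Blackboard], None]


def select_next(bb: Blackboard, sources: List[KnowledgeSource]) -> Optional[KnowledgeSource]:
    # Assumed policy: pick the first source whose condition holds.
    for ks in sources:
        if ks.condition(bb):
            return ks
    return None


def run_controller(bb: Blackboard, sources: List[KnowledgeSource], max_steps: int = 100) -> List[str]:
    """Repeatedly read the blackboard and run one triggered knowledge source.

    Returns the names of the sources in the order they ran. max_steps guards
    against sources whose conditions never become false.
    """
    trace: List[str] = []
    for _ in range(max_steps):
        ks = select_next(bb, sources)
        if ks is None:  # no condition holds, so the controller stops
            break
        ks.action(bb)
        trace.append(ks.name)
    return trace


def tokenize(bb: Blackboard) -> None:
    bb["tokens"] = bb["text"].split()


def count(bb: Blackboard) -> None:
    bb["count"] = len(bb["tokens"])


# Listed "out of order" on purpose: the controller only looks at conditions.
sources = [
    KnowledgeSource("count", lambda bb: "tokens" in bb and "count" not in bb, count),
    KnowledgeSource("tokenize", lambda bb: "text" in bb and "tokens" not in bb, tokenize),
]
bb: Blackboard = {"text": "a b c"}
print(run_controller(bb, sources))  # ['tokenize', 'count']
print(bb["count"])                  # 3
```

The controller never refers to a specific knowledge source by name; the execution order falls out of the conditions and the current blackboard state.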

At first glance, this pattern is easy to understand and feels elegant. Each knowledge source can be implemented independently, without direct interaction with other knowledge sources. On the other side, the control component can also be implemented to be agnostic to the knowledge sources, relying only on their conditions to make control decisions.

However, this apparent elegance comes at the cost of making the behavior of the whole system either non-deterministic or hard to reason about. This is because the pattern essentially hides the dependencies between the knowledge sources and the control component. Since all knowledge sources read from and write to the same data source (the blackboard), each one still affects the behavior of the others through the shared data, even though they never interact directly.
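A minimal Python sketch of such a hidden dependency, using two hypothetical knowledge sources (`PARSE`, `TOTAL`) and a hypothetical controller `run`, none of which come from the original system. `TOTAL` never mentions `PARSE`, yet it can only fire after `PARSE` has written `numbers` to the blackboard. Removing `PARSE` raises no error; it just silently stops `total` from ever being produced.

```python
from typing import Any, Callable, Dict, List, Tuple

Blackboard = Dict[str, Any]
# Hypothetical minimal knowledge-source shape: (name, condition, action).
KS = Tuple[str, Callable[[Blackboard], bool], Callable[[Blackboard], None]]


def parse(bb: Blackboard) -> None:
    bb["numbers"] = [int(x) for x in bb["raw"].split(",")]


def total(bb: Blackboard) -> None:
    bb["total"] = sum(bb["numbers"])


PARSE: KS = ("parse", lambda bb: "raw" in bb and "numbers" not in bb, parse)
# Nothing here refers to PARSE, but this condition can only become true after it runs.
TOTAL: KS = ("total", lambda bb: "numbers" in bb and "total" not in bb, total)


def run(bb: Blackboard, sources: List[KS]) -> Blackboard:
    # Run the first triggered source until no condition holds any more.
    progress = True
    while progress:
        progress = False
        for _name, condition, action in sources:
            if condition(bb):
                action(bb)
                progress = True
                break
    return bb


with_parse = run({"raw": "1,2,3"}, [PARSE, TOTAL])
without_parse = run({"raw": "1,2,3"}, [TOTAL])
print(with_parse)     # {'raw': '1,2,3', 'numbers': [1, 2, 3], 'total': 6}
print(without_parse)  # {'raw': '1,2,3'}
```

The dependency of `TOTAL` on `PARSE` exists only in the shape of the data on the blackboard, so nothing in either knowledge source, or in the controller, documents it.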

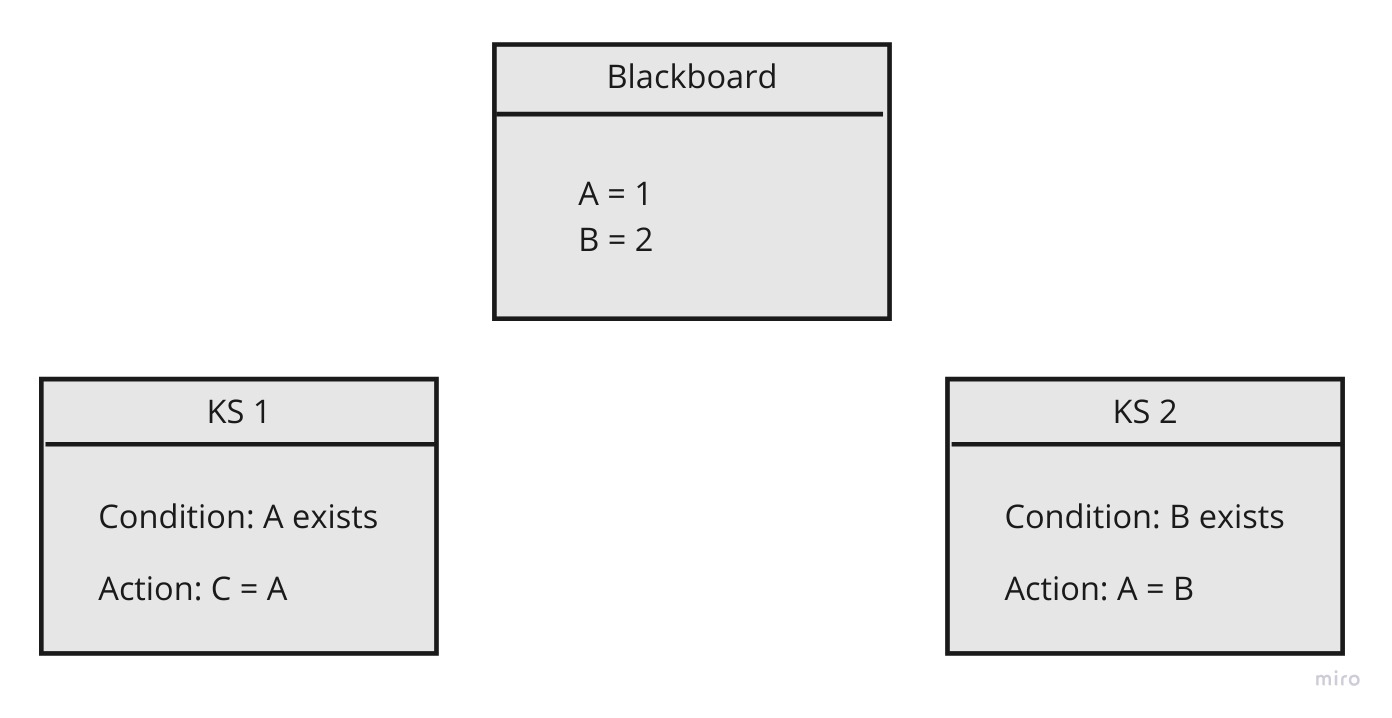

In this pattern, the logic of the system is embedded in the outputs of the knowledge sources, in their trigger conditions, and in the logic built into the control component. Here’s a simple example:

The conditions of both knowledge sources will pass, but depending on the order in which KS1 and KS2 execute, the value of C will be different. From the perspective of each knowledge source, neither depends on the other, yet the system’s behavior varies with the order in which they run. With more knowledge sources like these, and even more convoluted relationships in their execution order, the system can easily grow into an unmanageable state.
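A hypothetical pair of knowledge sources shows the same kind of effect in a minimal Python sketch. The names KS1, KS2, and C come from the text, but the conditions, the actions, and the extra keys `A` and `B` are invented for illustration: KS1 computes `C` from `A`, and KS2 overwrites `A` from `B`. Both conditions hold on the initial blackboard, so either order is a legal choice for a controller.

```python
from itertools import permutations
from typing import Any, Callable, Dict, List, Tuple

Blackboard = Dict[str, Any]
KS = Tuple[str, Callable[[Blackboard], bool], Callable[[Blackboard], None]]


def ks1_action(bb: Blackboard) -> None:
    bb["C"] = bb["A"] + 1   # reads A, writes C


def ks2_action(bb: Blackboard) -> None:
    bb["A"] = bb["B"] * 10  # reads B, overwrites A


KS1: KS = ("KS1", lambda bb: "A" in bb, ks1_action)
KS2: KS = ("KS2", lambda bb: "B" in bb, ks2_action)


def run_in_order(order: List[KS]) -> int:
    bb: Blackboard = {"A": 1, "B": 2}
    for name, condition, action in order:
        # Both conditions hold whichever source runs first.
        if not condition(bb):
            raise RuntimeError(f"{name} was expected to be triggerable")
        action(bb)
    return bb["C"]


for order in permutations([KS1, KS2]):
    print([name for name, _, _ in order], "-> C =", run_in_order(list(order)))
# ['KS1', 'KS2'] -> C = 2
# ['KS2', 'KS1'] -> C = 21
```

Neither action mentions the other knowledge source, yet the final value of `C` is decided entirely by the ordering the control component happens to choose.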

Having emerged from an earlier wave of AI research, this pattern was useful for combining multiple knowledge sources with heuristic rules in the control component to find an approximate solution. This is similar to how a neural network finds an approximation by combining linear functions with nonlinear activations. However, machine learning systems and software engineering systems have different focuses and principles. For problems with a deterministic solution, a system needs above all to be testable, easy to debug, and easy to change. For this reason, applying patterns suited to machine learning problems to traditional software systems often does not work well.